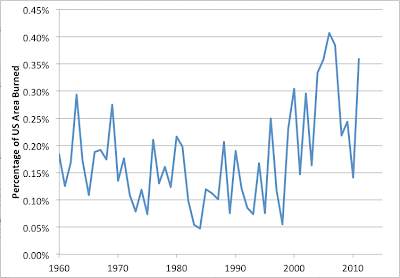

I recently stumbled across statistics for the total acreage in the United States consumed by fire each year since 1960. Graphed over time, the data look as follows:

Here I have expressed the acreage burned as a percentage of the total area of the United States. Notice the pattern of fire decreasing through the 1960s and 1970s and then increasing fitfully from there.

That reminded me loosely of the Palmer Drought Severity Index (PDSI) data for the US (negative values are drier than normal, positive values wetter):

Note how the US became wetter after the 1960s and then drier after the 1990s. And of course, it makes sense that drier conditions would lead to more and bigger fires.

To make a closer comparison I took the PDSI data for the contiguous US averaged over June-August and then made a scatter plot of the fire area versus PDSI:
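Here is a minimal sketch of that data preparation. It assumes the monthly PDSI values and the annual burned acreage are already loaded into dictionaries keyed by year. The function names are hypothetical. The total-area constant is also an assumption: roughly 2.43 billion acres, i.e. about 3.8 million square miles including water. Swap in whatever area definition the percentages above actually used.

```python
from __future__ import annotations

# Assumed total US area in acres (~3.8 million sq mi incl. water); hypothetical choice.
US_TOTAL_ACRES = 2.43e9

def summer_pdsi(monthly: dict[int, float]) -> float:
    """Average PDSI over June-August (months 6, 7 and 8)."""
    return sum(monthly[m] for m in (6, 7, 8)) / 3

def percent_burned(acres_burned: float, total_acres: float = US_TOTAL_ACRES) -> float:
    """Burned acreage expressed as a percentage of the total area."""
    return 100.0 * acres_burned / total_acres

def build_pairs(pdsi_by_year: dict[int, dict[int, float]],
                acres_by_year: dict[int, float]) -> list[tuple[float, float]]:
    """Return (summer PDSI, % burned) pairs for years present in both datasets."""
    years = sorted(set(pdsi_by_year) & set(acres_by_year))
    return [(summer_pdsi(pdsi_by_year[y]), percent_burned(acres_by_year[y]))
            for y in years]
```

Only years present in both datasets are kept, so a gap in either series silently drops that year rather than raising an error.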

As you can see, there's definitely a relationship, with around three times more area burned in the driest years than in the wettest years. However, it's far from the only thing going on: there is substantial scatter away from any simple relationship. Probably much of the variation is caused by regional fluctuations. Half the country very dry and the other half very wet will result in a lot more fires than everywhere being uniformly normal, but the national average PDSI will be about the same. The PDSI may also not perfectly capture the fire-relevant sense of "drought".
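The regional-averaging point can be illustrated numerically. Suppose burned area grows exponentially as PDSI falls, which is a convex relationship. Then a country split into a very dry half and a very wet half burns more than one that is uniformly normal, even though both have the same average PDSI. With a straight-line relationship the two cases would come out identical. The parameters `A` and `B` below are hypothetical illustrative values, not fitted to the real data, so the size of the effect here means nothing beyond showing its direction.

```python
import math

# Hypothetical exponential fire/drought relationship: burned % = A * exp(-B * pdsi).
# A and B are illustrative assumptions, not values fitted to the real data.
A = 0.2
B = 0.11

def burned_percent(pdsi: float) -> float:
    """Burned area (% of a region) as a convex, decreasing function of PDSI."""
    return A * math.exp(-B * pdsi)

def national_burned(regional_pdsi: list) -> float:
    """National burned % when each equal-sized region has its own PDSI."""
    return sum(burned_percent(p) for p in regional_pdsi) / len(regional_pdsi)

uniform = national_burned([0.0, 0.0])   # everywhere normal
split = national_burned([-4.0, 4.0])    # half very dry, half very wet
# Both cases have a national average PDSI of 0, but the split case burns more.
print(uniform, split, split / uniform)
```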

The exponential trendline shown had a slightly better fit than a straight line (R² of 33% vs. 28%), but I wouldn't necessarily have high confidence in extrapolating it very far. Still, it seems clear that if the US continues to dry out, a lot more acreage will burn in fires.
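This sketch shows one common way to compare the two kinds of trendline: fit a straight line to y, and fit a straight line to ln(y) for the exponential. It assumes the exponential trendline was fitted by the log transform, which is how many spreadsheet tools do it, but that is an assumption about the plot above. Note that an R² measured on ln(y) is not strictly comparable with one measured on y. The sample data below are hypothetical.

```python
import math

def linear_fit(xs, ys):
    """Ordinary least squares y = a + b*x; returns (a, b, r_squared)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = my - b * mx
    ss_res = sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - my) ** 2 for y in ys)
    return a, b, 1 - ss_res / ss_tot

def exponential_fit(xs, ys):
    """Fit y = A*exp(k*x) via a linear fit of ln(y) on x.

    Returns (A, k, r_squared); r_squared is measured on ln(y), not on y.
    """
    a, k, r2 = linear_fit(xs, [math.log(y) for y in ys])
    return math.exp(a), k, r2

# Hypothetical (summer PDSI, % burned) pairs, for illustration only.
pdsi = [-4.0, -2.5, -1.0, 0.0, 1.5, 3.0, 4.5]
burned = [0.30, 0.22, 0.20, 0.14, 0.13, 0.10, 0.09]
print(linear_fit(pdsi, burned))
print(exponential_fit(pdsi, burned))
```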

A note for the especially statistically cautious or skeptical amongst you: the original data source for the burned-area figures warns as follows:

> Prior to 1983, sources of these figures are not known, or cannot be confirmed, and were not derived from the current situation reporting process. As a result the figures above prior to 1983 shouldn't be compared to later data.

To assess this, I also tried the plot and fits with the pre-1983 and post-1983 data separately:

The fitted trendlines are pretty similar, so I feel comfortable that, whatever reporting issues there may have been prior to 1983, it's fine to combine all the data for this purpose. Note that the post-1983 data include four years drier than any year in 1960-1982, and these were all bad fire years.

This also tends to rule out the idea that the upsurge in fires over the last decade or two is primarily due to changes in fuel load caused by past fire suppression. That would show up as an upward shift in the fire/drought relationship, with more fire for the same amount of drought in the post-1983 data. That doesn't seem to be the case: the blue line is slightly steeper than the red one, but only slightly, and there's no evidence of an overall upward shift.
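The split-period check can be sketched as follows. Fit an exponential separately to the pre-1983 and post-1983 records, then compare two things. The first is the steepness k, or how strongly fire responds to drought. The second is the fitted level at a fixed PDSI, which would rise if fuel build-up were the main driver. Evaluating the level at PDSI 0 is an arbitrary choice made for this sketch. The function names are hypothetical. Each period needs at least two distinct PDSI values.

```python
import math

def fit_log_linear(xs, ys):
    """Least-squares fit of ln(y) = c + k*x; returns (c, k)."""
    ls = [math.log(y) for y in ys]
    n = len(xs)
    mx, ml = sum(xs) / n, sum(ls) / n
    k = (sum((x - mx) * (l - ml) for x, l in zip(xs, ls))
         / sum((x - mx) ** 2 for x in xs))
    return ml - k * mx, k

def compare_periods(records, split_year=1983):
    """Fit pre- and post-split records separately.

    records: iterable of (year, summer_pdsi, percent_burned).
    Returns {"pre": (k, level), "post": (k, level)}, where level is the
    fitted burned % at PDSI 0. A steeper (more negative) k means a stronger
    drought response; a higher level means an upward shift of the curve.
    """
    periods = {"pre": [], "post": []}
    for year, pdsi, burned in records:
        periods["pre" if year < split_year else "post"].append((pdsi, burned))
    out = {}
    for name, pts in periods.items():
        c, k = fit_log_linear([p for p, _ in pts], [b for _, b in pts])
        out[name] = (k, math.exp(c))
    return out
```

Under this framing, the fuel-load hypothesis predicts a clearly higher post-1983 level. Similar levels with slightly different k would match the pattern described above.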

Finally, it's worth musing on the implications of this picture:

Climate models suggest that in thirty years the new normal in the US will be a PDSI of about -4 on the historical scale. So if we extrapolate the relationship from earlier, and figure that in 30 years we'll be running from -9 to +1 instead of -5 to +5, then we'd be in the region of the graph on either side of the red line:

Again, this assumes the extrapolation is valid, and it's probably only roughly so. There will be a lot more fires: not a raging inferno across the whole country, but something like 50-75% more area burned per year than in past decades (with large variations between good and bad years, as now).
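Here is a back-of-envelope check of that range, which is not necessarily how the figure above was derived. Assume a pure exponential, y = A·exp(-b·PDSI). Also assume burned area differs by a factor R between the wet (+5) and dry (-5) ends of the historical range. A shift of -4 then multiplies burned area by R^(4/10). The "around three times" from the scatter plot gives about 1.55. The value R = 4, which is an assumption chosen for the upper end, gives about 1.74.

```python
import math

def shift_multiplier(ratio_over_range: float, pdsi_range: float, shift: float) -> float:
    """Multiplier on burned area when PDSI shifts by `shift`, assuming y = A*exp(-b*pdsi).

    b is chosen so burned area differs by `ratio_over_range` across a PDSI
    range of width `pdsi_range` (e.g. -5 to +5 gives a width of 10).
    """
    b = math.log(ratio_over_range) / pdsi_range
    return math.exp(-b * shift)

# Assumed ratios between driest and wettest years; shift of -4 PDSI units.
for ratio in (3.0, 4.0):
    print(ratio, shift_multiplier(ratio, 10.0, -4.0))
```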

Of course, if we don't start making much more serious efforts, climate change won't stop or slow down in the 2030s, and we'll be heading further and further off the left side of that graph as the century goes on.

4 comments:

Hi Stuart. This is a great post.

I would imagine that at some point the acreage burned will begin to decline because previous burn scars will interfere with the ability of future fires to spread, so fires may actually become self-limiting over time. In some places this may already be happening, which could begin to skew the numbers.

George Wuerthner (ecologist) has said previously that we are only good at suppressing wildfires during wet times. During dry times we either don't have much effect on them or, in some cases, we make them much worse through firefighting tactics like backburning. I would imagine that the net effect of suppression has been to amplify the warm-wet period from the 1970s through the 1990s, although probably not as much as we'd like to think.

Like you, George has observed that fuel loading isn't the primary driver behind larger wildfires; extreme weather is, i.e. drought and wind events. George is a fascinating guy, kind of a thorn in the side of the forest and fire management leadership, who are pushing very hard for more intensive management of western forests. His observations about the recent Colorado wildfires and the subdivisions that burned were interesting, to say the least. Anyone whom the establishment goes out of its way to marginalize is probably worth listening to.

Anyway, great post.

In *A Great Aridness*, William deBuys makes the same point about the relationship between drought and wildfire size. He argues that warming has several effects that lead to bigger fires: a constant level of precipitation is relatively drier in a warmer climate, early snowmelt changes when moisture is available, heat and drought put greater overall stress on trees, and beetle kill increases. The last point doesn't get enough attention. The Rocky Mountains have been set up for some truly staggering fires in future years; for example, Colorado, Wyoming and Montana each have more than two million acres of beetle-killed timber, much of it on terrain too rugged to be rehabilitated.

Thank you for the post; it offers a great perspective. However, I just want to point out that it doesn't take into account many outside factors. One in particular that bugs me is fires started by people, especially given the nature of terrorism threats now and in the future.

I could imagine us seeing more such fires started by people, and this would obviously skew the numbers.

If you find time or are interested, I would love to see this data with man-created fires data removed.

Holy Smokes!
